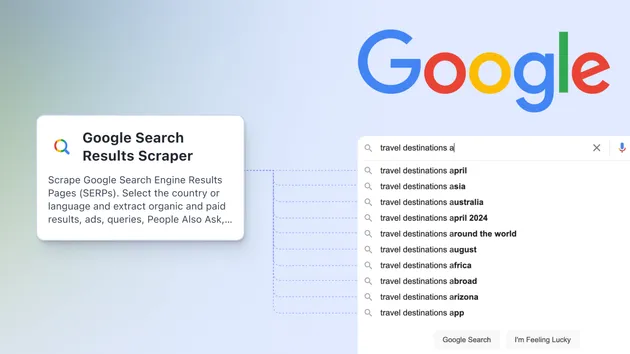

Google Search Scraper For Live App

lukaskrivka/google-search-live

A simplified version of Google Search Scraper optimized for fast response times. Use it only if you really need sub-5-second responses, because it is less flexible and less reliable than the full scraper.
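As a rough illustration of how a client might call the running Actor, the sketch below builds a request URL for the `GET /` route defined in `src/main.ts` and reads the `{ error, results }` JSON body that route sends. The helper names (`buildLiveSearchUrl`, `liveSearch`) and the base URL are hypothetical and not part of the Actor; the query parameters (`q`, `num`, `proxyGroup`, `saveHtml`) come from the source. It assumes a runtime with global `URL` and `fetch` (for example Node.js 18 or newer).

```typescript
// Hypothetical client-side helpers; not part of the Actor's source code.
interface LiveSearchParams {
    q: string;
    num?: number;
    proxyGroup?: string;
    saveHtml?: boolean;
}

// Shape of the JSON body sent by the Actor's GET handler.
interface LiveSearchResponse {
    error: string | null;
    results: unknown[];
}

// Builds the URL for the "/" route and URL-encodes every parameter value.
function buildLiveSearchUrl(baseUrl: string, params: LiveSearchParams): string {
    const url = new URL('/', baseUrl);
    url.searchParams.set('q', params.q);
    if (params.num !== undefined) url.searchParams.set('num', String(params.num));
    if (params.proxyGroup) url.searchParams.set('proxyGroup', params.proxyGroup);
    if (params.saveHtml) url.searchParams.set('saveHtml', 'true');
    return url.toString();
}

// Sends the request and returns the parsed body (also for 400/503 responses,
// which carry the same { error, results } shape).
async function liveSearch(baseUrl: string, params: LiveSearchParams): Promise<LiveSearchResponse> {
    const response = await fetch(buildLiveSearchUrl(baseUrl, params));
    return await response.json() as LiveSearchResponse;
}
```

For a local run, the base URL would be something like `http://localhost:3000`, matching the fallback port in `src/main.ts`; on the Apify platform it would be the run's container URL.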

.dockerignore

# configurations
.idea

# crawlee and apify storage folders
apify_storage
crawlee_storage
storage

# installed files
node_modules

# git folder
.git

.editorconfig

root = true

[*]
indent_style = space
indent_size = 4
charset = utf-8
trim_trailing_whitespace = true
insert_final_newline = true
end_of_line = lf

.eslintrc

{
    "root": true,
    "env": {
        "browser": true,
        "es2020": true,
        "node": true
    },
    "extends": [
        "@apify/eslint-config-ts"
    ],
    "parserOptions": {
        "project": "./tsconfig.json",
        "ecmaVersion": 2020
    },
    "ignorePatterns": [
        "node_modules",
        "dist",
        "**/*.d.ts"
    ]
}

.gitignore

# This file tells Git which files shouldn't be added to source control

.DS_Store
.idea
dist
node_modules
apify_storage
storage
.vscode

storage

package.json

{
    "name": "google-search-live",
    "version": "0.0.1",
    "type": "module",
    "description": "This is a boilerplate of an Apify actor.",
    "engines": {
        "node": ">=16.0.0"
    },
    "dependencies": {
        "@apify/google-extractors": "^1.2.5",
        "@types/express": "^4.17.17",
        "apify": "^3.0.0",
        "crawlee": "^3.0.0",
        "express": "^4.18.2"
    },
    "devDependencies": {
        "@apify/eslint-config-ts": "^0.2.3",
        "@apify/tsconfig": "^0.1.0",
        "@typescript-eslint/eslint-plugin": "^5.55.0",
        "@typescript-eslint/parser": "^5.55.0",
        "eslint": "^8.36.0",
        "ts-node": "^10.9.1",
        "typescript": "^4.9.5"
    },
    "scripts": {
        "start": "npm run start:dev",
        "start:prod": "node dist/main.js",
        "start:dev": "ts-node-esm -T src/main.ts",
        "build": "tsc",
        "lint": "eslint ./src --ext .ts",
        "lint:fix": "eslint ./src --ext .ts --fix",
        "test": "echo \"Error: oops, the actor has no tests yet, sad!\" && exit 1"
    },
    "author": "It's not you it's me",
    "license": "ISC"
}

tsconfig.json

{
    "extends": "@apify/tsconfig",
    "compilerOptions": {
        "module": "ES2022",
        "target": "ES2022",
        "outDir": "dist",
        "noUnusedLocals": false,
        "lib": ["DOM"]
    },
    "include": [
        "./src/**/*"
    ]
}

.actor/Dockerfile

# Specify the base Docker image. You can read more about
# the available images at https://crawlee.dev/docs/guides/docker-images
# You can also use any other image from Docker Hub.
FROM apify/actor-node:16 AS builder

# Copy just package.json and package-lock.json
# to speed up the build using Docker layer cache.
COPY package*.json ./

# Install all dependencies. Don't audit to speed up the installation.
RUN npm install --include=dev --audit=false

# Next, copy the source files using the user set
# in the base image.
COPY . ./

# Build the project (compiles the TypeScript sources into dist/).
RUN npm run build

# Create final image
FROM apify/actor-node:16

# Copy just package.json and package-lock.json
# to speed up the build using Docker layer cache.
COPY package*.json ./

# Install NPM packages, skip optional and development dependencies to
# keep the image small. Avoid logging too much and print the dependency
# tree for debugging
RUN npm --quiet set progress=false \
    && npm install --omit=dev --omit=optional \
    && echo "Installed NPM packages:" \
    && (npm list --omit=dev --all || true) \
    && echo "Node.js version:" \
    && node --version \
    && echo "NPM version:" \
    && npm --version \
    && rm -r ~/.npm

# Copy built JS files from builder image
COPY --from=builder /usr/src/app/dist ./dist

# Next, copy the remaining files and directories with the source code.
# Since we do this after NPM install, quick build will be really fast
# for most source file changes.
COPY . ./


# Run the image.
CMD npm run start:prod --silent

.actor/actor.json

{
    "actorSpecification": 1,
    "name": "google-search-live",
    "title": "Project Cheerio Crawler Typescript",
    "description": "Crawlee and Cheerio project in typescript.",
    "version": "0.0",
    "meta": {
        "templateId": "ts-crawlee-cheerio"
    },
    "dockerfile": "./Dockerfile"
}

src/handle-request.ts

import { Actor, log } from 'apify';
// @ts-ignore
import { extractResults } from '@apify/google-extractors';
import { gotScraping } from 'got-scraping';

// Downloads the HTML of the search page through Apify Proxy.
// Returns null if all attempts fail.
const tryGetHtml = async (context: any): Promise<string | null> => {
    const { url, proxyGroup } = context;
    // Give it 3 tries
    for (let i = 0; i < 3; i++) {
        try {
            const password = process.env.APIFY_PROXY_PASSWORD;
            const groupString = proxyGroup ? `,groups-${proxyGroup}` : '';
            const { body, statusCode } = await gotScraping({
                url,
                proxyUrl: `http://country-us${groupString}:${password}`
                    + `@proxy.apify.com:8000`,
            });
            if (statusCode !== 200) {
                throw new Error(`Status code ${statusCode}`);
            }
            return body;
        } catch (e) {
            log.warning(`Failed to get HTML from ${url} on retry ${i} with error: ${e}`);
        }
    }
    return null;
};

// Fetches the search page and returns the extracted results,
// or null if the page could not be downloaded.
export const handleRequest = async (context: any): Promise<any | null> => {
    const { url, saveHtml, saveHtmlToKeyValueStore } = context;

    const body = await tryGetHtml(context);

    if (!body) {
        return null;
    }

    const data = {
        url,
        ...await extractResults(body),
    };

    if (saveHtml) {
        data.html = body;
    }

    // NOTE: `true ||` makes this branch run on every request,
    // regardless of the saveHtmlToKeyValueStore flag.
    if (true || saveHtmlToKeyValueStore) {
        const key = `${Math.random()}.html`;
        await Actor.setValue(key, body, { contentType: 'text/html' });
        data.htmlSnapshotUrl = `https://api.apify.com/v2/key-value-stores/${Actor.getEnv().defaultKeyValueStoreId}/records/${key}`;
    }

    return data;
};

src/main.ts

import { Actor, log } from 'apify';
import { sleep } from 'crawlee';
import express from 'express';

import { handleRequest } from './handle-request.js';

// Initialize the Apify SDK
await Actor.init();

const app = express();

// For simple requests
app.get('/', async (req, res) => {
    const start = Date.now();
    const {
        q,
        proxyGroup = 'RESIDENTIAL',
        num = 10,
        saveHtml = false,
        saveHtmlToKeyValueStore = false,
    } = req.query as any;
    if (!q) {
        res.status(400).send({ error: 'Missing query', results: [] });
        return;
    }

    log.info(`Received request for query: ${q}`);
    const protocol = proxyGroup.startsWith('GOOGLE') ? 'http' : 'https';
    const result = await handleRequest({
        // Encode the query so characters such as spaces, "&" or "#" cannot break the search URL
        url: `${protocol}://www.google.com/search?q=${encodeURIComponent(q)}&num=${num}`,
        proxyGroup,
        saveHtml,
        saveHtmlToKeyValueStore,
    });
    const timeSecs = ((Date.now() - start) / 1000).toFixed(2);
    if (!result) {
        log.warning(`Failed to get results for query: ${q} in ${timeSecs} seconds`);
        res.status(503).send({ error: 'Failed to get results', results: [] });
        return;
    }
    log.info(`Returning results for query: ${q} in ${timeSecs} seconds`);
    res.send({ error: null, results: [result] });
});

// For requests with parameters
app.post('/', (_req, res) => {
    res.send('POST endpoint not implemented yet.');
});

const port = process.env.APIFY_CONTAINER_PORT || 3000;
const url = process.env.APIFY_CONTAINER_URL || 'http://localhost';

app.listen(port, () => {
    console.log();
    log.info(`Server started on URL+port: ${url}:${port}`);
    console.log();
});

// Keep the Actor running (99,999,999 ms is roughly 27.8 hours)
await sleep(99_999_999);

Developer

Maintained by Community

Actor metrics

- 1 monthly user

- 1 star

- Created in Mar 2023

- Modified over 1 year ago

Lukáš Křivka
